URL targeting optimization with CQEngine

URL targeting in ad serving industry

In the ad serving industry, URL targeting is an effective mechanism that enables advertisers to specify URLs (web pages) on which their ad can be served. Subject of targeting can be specific URL or group of URLs. It all depends on logic behind one of many types of rules that can be used in order to match URLs to demands of advertisers. Depending on the choice of rule, its matching logic can be applied just on the domain section of URL or entire URL.

Based on advertiser requirements there are 5 most frequently used rules in order to achieve matching:

- START_WITH:

- `https, start_with, url` – all https pages,

- `example, start_with, domain` – all domains that start with the word ‘example’.

- END_WITH:

- `.edu, end_with, domain` – all pages where domain ends with ‘.edu’.

- EXACT:

- `example.com, exact, domain` – all pages where domain is `example.com`.

- CONTAIN:

- `example, contain, url` – all pages with `example` as a part of URL,

- `example, contain, domain` – all pages where domain contains `example`.

- PATTERN:

- `example.com/*/post`, pattern, url` – all pages with URL that matches “example.com/*/post” pattern.

Challenges

Often there is a need to find all predefined rules that are matching the presented URL, so we are able to associate additional serving constrictions.

Here, we arrive at the initial challenge where multiple rules must be matched to the presented URL through a complex set of operations.

From the previously mentioned set of possible rules, CONTAIN and PATTERN rules belong to more complex ones. If we take in consideration that the total set of rules easily grows over time and can reach a few hundreds of thousands of rules if not millions in the entire ad serving logic, it is logical that a fast and optimized engine that will perform matching is a must. This is the only way to guarantee stability and performances of application overall. From previous experience with Apache Lucene and positive results that came from its implementation it was an obvious starting point of resolving matching challenges that was just described.

Apache Lucene

By definition, Apache Lucene is a high-performance, full-featured text search engine library written entirely in Java. It is a technology suitable for nearly any application that requires full-text search.

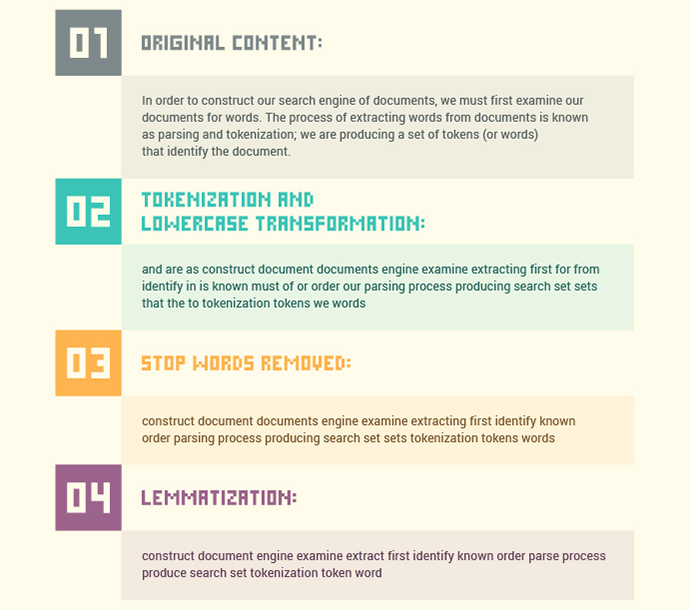

Lucene uses an inverted index behind the scene in order to optimize full text search. To enable this, an inverted index must exist prior to initialization of search and is a result of a complex set of operations that must take place. Initial document is tokenized, spliced on a more basic part called tokens. After this, tokens are additionally processed in order to be reduced. Process converts capital letters to lowercase and takes out “stop” words (words with high frequency of occurrence) etc. Example of this process is shown below on Figure 1.

In the end, every processed word/token is associated with the document where it is located. This is a defined pipeline through which an inverted index is created. This way, by performing search just on defined reverse index structure, a search time is drastically shortened in comparison with traditional full text search on all documents.

If described procedure through which Lucene performs searches is translated to requirements of URL targeting from the beginning of this article, we can see that in this case rules are Lucene documents while formed query directed to Lucene is actually an URL. However in order to use Lucene in an optimized way things must be put in reverse. We should index incoming queries aka rules and use it on document aka URL. Lucene does not support this on older versions. Implementation is available only from version 8.2.0 with Luwark library.

Figure 1. Document processing example

Luwark is a high performance stored query engine based on the Lucene. It allows definition of a set of search queries and then monitor a stream of documents for any that might match these queries.

Luwark requires usage of appropriate Monitor object which represent collection of predefined queries (MonitorQuery) and InputDocument object which is a document used for matching. Basic usage looks like this:

Monitor monitor = new Monitor(new LuceneQueryParser("field"),

new TermFilteredPresearcher());

MonitorQuery mq = new MonitorQuery("query1", "field:text");

List<QueryError> errors = monitor.update(mq);

// match one document at a time

InputDocument doc = InputDocument.builder("doc1")

.addField(textfield, document, new StandardAnalyzer())

.build();

Matches<QueryMatch> matches = monitor.match(doc, SimpleMatcher.FACTORY);

If this approach is used as solution of original problem, Monitor represents collection of predefined matching rules (start with, ends with, contains etc.) while InputDocument is either URL or domain on which matching is performed.

After the initial test (with a couple of thousands of test rules), the resulting response time was way out of our desired interval that was acceptable (below 10 ms). Biggest impact in this case was coming from usage of CONTAIN and PATTERN matching operations. Beside this, searching and matching operations inside Lucene are organized around usage of tokens and adequate indexing that can largely influence search precision that must value each character equally, even special characters.

Further investigation brought us to the final solution in form of Radix Tree structure, which resolved the problem of search precision all the way to singular character. This line of problems and resulting additional investigations for satisfying solutions brought us to CQEngine.

CQEngine to the rescue

CQEngine (Collection Query Engine) is a high-performance Java collection which can be searched with SQL-like queries, with extremely low latency. It solves the scalability and latency problems of iteration by making it possible to build indexes on the fields of the objects stored in a collection, and applying algorithms based on the rules of set theory to reduce the time complexity of accessing them.

Indexes can be created using on-heap persistence, off-heap persistence or disk persistence. In our case, we chose on-heap persistence (default one), because of performance benefits. On-heap persistence is by far the fastest, followed by off-heap persistence, and then by disk persistence. There is no need for deserialization of data associated with matching rules. One thing should be kept in mind: On heap persistence can have an impact on total heap size.

CQEngine provides a wide range of implemented indexes for all types of described persistence techniques. In our case points of interest were on heap indexes, and some of them are HashIndex, RadixTreeIndex, ReversedRadixTree, InvertedRadixTreeIndex etc.

RadixTree is a tree data structure which allows values to be looked up based on prefixes of the keys with which they were associated, as well as based on exact matches for keys. A radix tree essentially allows “equals” and “starts with” lookup.

ReversedRadixTree is a tree data structure which allows values to be looked up based on suffixes of the keys with which they were associated, as well as based on exact matches for keys. A reverse radix tree essentially allows “equals” and “ends with” lookup. This has the same structure as a radix tree, but this tree reverses characters in the keys added to the tree, and reverses characters supplied for queries. This is not the same as a suffix tree – a suffix tree stores all possible suffix substrings of keys (“contains” lookup). This tree only stores the original key in reverse character order.

InvertedRadixTree is an extension of RadixTree which is set up to scan external documents for keys previously added to the tree, rather than for data contained in the tree itself. This method of scanning external documents for keys (or keywords) can be significantly faster than repeatedly scanning the same document for each and every keyword using contains(CharSequence) provided through Java API on the document. Time complexity is O(d) rather than O(dn), where d = length of document and n = number of keys / keywords. An inverted radix tree essentially allows “equals”, “in”, “isPreffixOf” and “isContainedIn” lookup.

Engine on itself provides a predefined set of queries like equal, in, startsWith, isPrefixOf, isContainedIn, etc. This initial set is not easily extendable because of internal mechanisms that provide functionality of the engine.

If we refer to the initial set of rules from the beginning of this article it is noticeable that the START_WITH rule, that is used for matching of string to beginning of url or domain, is translated to isPreffixOf query defined in the engine itself. Similarly to this CONTAINS rule can be translated to isContainedIn query, EXACT rule translates to equal query while END_WITH and PATTERN rules doesn’t have properly matching implementation inside the engine.

In order to mitigate potential problems with heap memory it is desirable to use only a single index. From everything said above it can be concluded that InvertedRadixTree provided optimisation for the best part of required queries that were needed (3 out of 5).

Further on by observing implementation of ReversedRadixTreeIndex, because of reversed order in which keys are stored, END_WITH rule could be implemented by usage of this index with few required adaptations. This was done by reversing the order of characters defined in rule itself. During matching operations we reversed the order of character is urls and domains provided for matching as well. By executing this set of adaptations we were in position to use predefined isPrefixOf query and its optimisations provided by InvertedRadixTreeIndex.

When it comes to remaining PATTERN rule, things were not as simple as in previous cases. CQEngine provides matchesRegex query which can be used for this purpose however we decided to implement new query (patternMatches) that was meant to provide only wildcard support (“*” pattern) for matching in order to achieve better performances. Additionally PATTERN rule can partially be implemented as CONTAINS rule. If we would split PATTERN rule by “*” pattern, first part of the newly splitied set of strings could be indexed the same way as it is done with CONTAINS rule. Additionally isContainedIn query could be used now in order to reduce the set of results that require additional check that corresponds to the patternMatches query.

Finally because InvertedRadixTreeIndex does not support newly created query it was necessary to modify existing implementation in order to enable addition of new query as well as the support for indexing of PATTERN rule.

Enough of theory…give me some code

Rules from beginning of this article will be represented by PlacementRule class, where:

- enum Mode mark rule type,

- enum Scope mark weather rule should be applied to url or domain,

- value describes characteristic that must be checked,

- data – additional data associated with rule.

@Data

@AllArgsConstructor

public class PlacementRule {

private Mode mode;

private Scope scope;

private String value;

private Set<String> data;

public enum Mode {

START_WITH,

END_WITH,

CONTAIN,

PATTERN,

EXACT

}

public enum Scope {

DOMAIN,

URL

}

}

CQEngine needs to access fields inside objects, so that it can build indexes on fields, and retrieve the value of a certain field from any given object. It does not use reflection to do this, instead it uses attributes. An attribute is an accessor object which can read the value of a certain field in a POJO. So let’s define attributes for our values which we want to be indexed.

private static final SimpleAttribute<PlacementRule, String> RULE = new SimpleAttribute<>() {

@Override

public String getValue(PlacementRule rule, QueryOptions queryOptions) {

return rule.getValue();

}

};

private static final SimpleAttribute<PlacementRule, Mode> MODE = new SimpleAttribute<>() {

@Override

public Mode getValue(PlacementRule rule, QueryOptions queryOptions) {

return rule.getMode();

}

};

Now that we have our attributes, we can create IndexedCollection:

Collection<PlacementRule> rules = …

rules.stream()

.peek(PlacementTargetingImpl::prepare)

.collect(groupingBy(PlacementRule::getScope))

.forEach((scope, collection) -> {

IndexedCollection<PlacementRule> indexedCollection = new ConcurrentIndexedCollection<>();

indexedCollection.addIndex(HashIndex.onAttribute(MODE));

indexedCollection.addIndex(CustomInvertedRadixTreeIndex.onAttribute(RULE, PlacementTargetingImpl::adjustWhileIndexing));

indexedCollection.addAll(collection);

indexes.put(scope, indexedCollection);

});

What the previous code block executes is logic that will group PlacementRules by Scope. Simplified it means we will separate rules that are targeting only domains from rules directed toward urls. Next, a necessary IndexedCollection will be created and used in order to add appropriate indexes: HashIndex for MODE attribute which is used in order to decrease number of checkups for some PlacementRule and CustomInvertedRadixTreeIndex on RULE attribute. As a result PlacementRule search is optimised when matching is performed for url/domain.

Also here, during usage of adjustWhileIndexing custom implementation:

indexedCollection.addIndex(CustomInvertedRadixTreeIndex.onAttribute(RULE,

PlacementTargetingImpl::adjustWhileIndexing));

a proprietary logic is active that provides optimization for PATTERN placement rule. As described previously, during indexing of this rules, pattern is splitted by “*” and only the first part of the splitted string is indexed.

private static String adjustWhileIndexing(String value, PlacementRule rule) {

if (rule.getMode() == PATTERN) {

int from = value.charAt(0) == '*' ? 1 : 0;

int index = value.indexOf("*", from);

if (index > 0) {

return value.substring(from, index);

}

}

return value;

}

Additionally during interaction through PlacementRules we modified END_WITH rules order in reverse..

private static void prepare(PlacementRule rule) {

String value = rule.getValue();

if (rule.getMode() == END_WITH) {

value = reverse(value).toString();

}

value = value.toLowerCase();

rule.setValue(value);

}

Note: It is important to emphasize that IndexedCollection provides functionality through which we can add new values in index later, as well as remove already existing ones.

Now that we have created IndexedCollection with proper indexes for optimization, the search procedure is rather simple. Also, CQEngine has predefined logic operators like OR, AND in order to simplify writing of more complex queries.

protected Set<String> findAll(Scope scope, String document) {

IndexedCollection<PlacementRule> index = indexes.get(scope);

if (index == null || document == null) {

return emptySet();

}

document = document.toLowerCase();

ResultSet<PlacementRule> results = index.retrieve(or(

and(mode(EXACT), isEqualTo(document)),

and(mode(END_WITH), isSuffixOf(document)),

and(mode(CONTAIN), isContainedIn(document)),

and(mode(START_WITH), isPrefixOf(document)),

and(mode(PATTERN), patternMatches(document))

));

return results.stream()

.map(PlacementRule::getData)

.flatMap(Collection::stream)

.collect(toCollection(LinkedHashSet::new));

}

Note: isSuffixOf method creates isPrefixOf query but in order that is reversed from original character order.

OK, let’s check statistics

Initial assumption was that in the end we could expect everyday execution and application of up to 500K of placement rules. In order to be ready for any situation we created initial tests with more than 1 million of placement rules.

In order to achieve desirable performances, indexing of placement rules was scheduled at the beginning of application deployment. As the entire indexing took just a couple of seconds, total performances were satisfactory.

For 1 million of placement rules total heap consumption was around 1GB which was more than acceptable in our case.

In the end, average speed for search was under 10 ms for search requests. Initially this latency was around 40 ms but it decreased rapidly (spinning up of JVM).

Summarize:

| For 1.000.000 placement rules Indexing: ~ 3 s Heap: ~ 1 GB Speed: < 10 ms |

Conclusion

In this article we hoped to describe all the problems from multiple aspects and conclusions that led us to CQEngine as the optimal solution for Url targeting. We described a minimal set of required features in order to achieve the desired targeting solution. For more details we suggest official documentation.

Biggest surprise during the entire process of research and implementations was modularity of the entire CQEngine that enabled us fast and simple intervention inside predefined set of features, so we could adapt them for our in house usage. End result was an average execution time of 10ms with a heap footprint of 1GB which was excellent considering the amount of data that was actively processed and searched.

In all use cases where fast iteration and optimized search is a must, CQEngine could easily be recommended. From it’s simple mechanism for querying, that can leverage from previous developer experience in SQL, modular architecture that makes it easily adaptable in various situations and versatility of its indexing strategies with addition of persistence capabilities, makes CQEngine well rounded solution, with flexible application in multiple environments and for various demands.

Written by

Marko Stijak

Software Developer